β can either be a constant defined prior to training or a parameter that is learned during training. Swish is a relatively simple function: it is the product of the input x and the sigmoid of x, and it looks as follows.

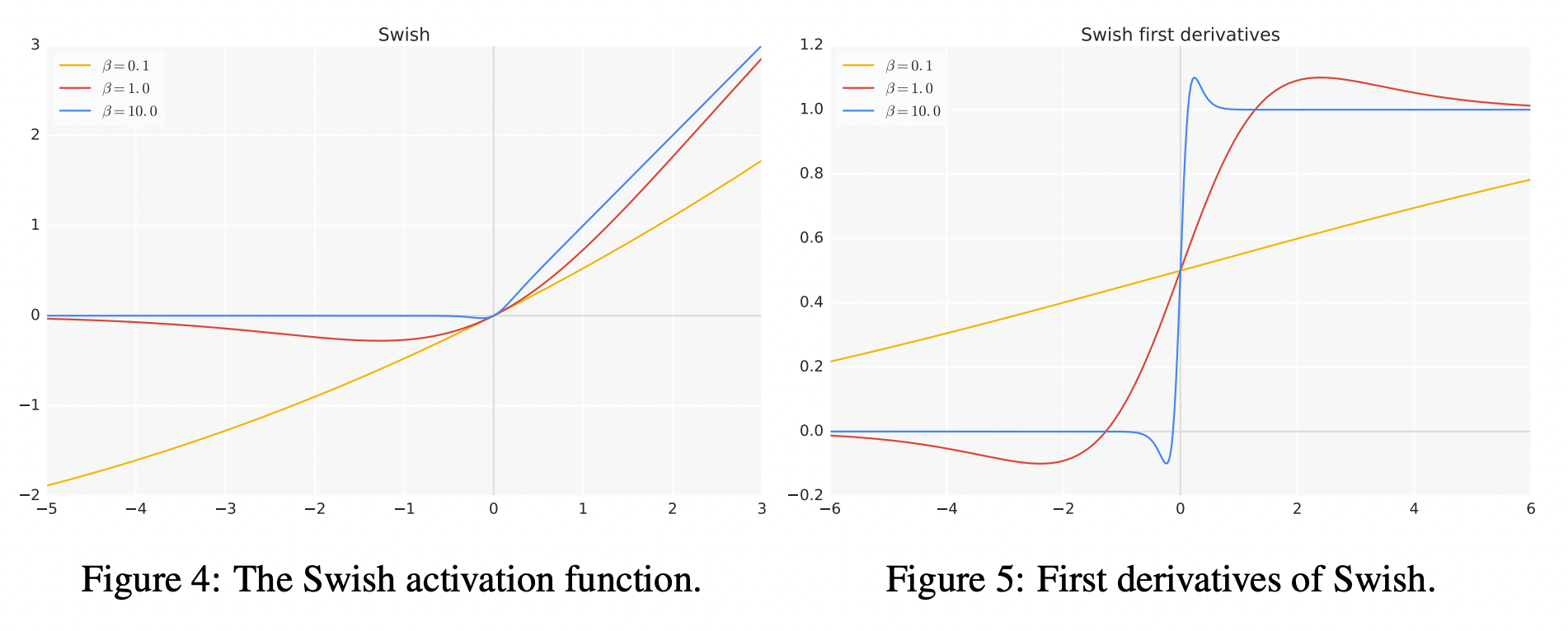
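As a rough illustration of the function plotted above, here is a minimal Python sketch of Swish with an optional β. The function names `sigmoid` and `swish` and the default `beta=1.0` are choices made for this example, not part of any particular library.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic sigmoid, written so that exp() never overflows."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def swish(x: float, beta: float = 1.0) -> float:
    """Swish: the input x multiplied by sigmoid(beta * x).

    beta is treated here as a fixed constant (an assumption of this
    sketch); in a framework it could instead be a trainable parameter.
    """
    return x * sigmoid(beta * x)


if __name__ == "__main__":
    for x in (-10.0, -1.0, 0.0, 1.0, 10.0):
        print(f"swish({x:+.1f}) = {swish(x):+.6f}")
```

With `beta=0.0` the gate is constantly 0.5, so the sketch reduces to x/2, while a large `beta` makes the output approach ReLU.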

Activation functions play an important role in deep learning. Various traditional activation functions proposed in the early days are widely used but remain limited. Therefore, it is necessary to combine several traditional activation functions to construct a new activation function that makes up for their shortcomings.

In October 2017, Prajit Ramachandran, Barret Zoph and Quoc V. Le from Google Brain proposed the Swish activation function. Swish is defined as f(x) = x · σ(βx), where σ(x) = 1/(1+exp(-x)) is the sigmoid function.

The shape of the Swish activation function looks similar to ReLU, being unbounded above and bounded below. The reason it looks a lot like ReLU is that for large enough positive values, σ(x) becomes approximately equal to 1, and hence the value of Swish becomes approximately equal to x. Similarly, for large enough negative values, σ(x) becomes approximately equal to 0, and hence the value of Swish becomes approximately equal to 0. Unlike ReLU, however, Swish is continuous and differentiable at all points and is non-monotonic. For inputs less than around 1.25, the derivative has a magnitude less than 1.

Nevertheless, this does not mean that Swish cannot be improved. Our experiments show that E-swish systematically outperforms any other well-known activation function, providing not only a better overall accuracy than both. They are as computationally efficient as ReLU functions, but tend to perform better on. The derivative of APTx also has fewer operations than Mish, hence making neural networks train faster compared to the Mish activation function.

Swish, Mish and Serf belong to the same family of activation functions possessing the self-gating property. Like Mish, Serf also possesses a pre-conditioner, which results in better optimization and thus enhanced performance. We define Serf as f(x) = x erf(ln(1 + e^x)), where erf is the error function (1998).
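The self-gating idea and the limiting behaviour described above can be sketched in a few lines of Python. Each function is written as x multiplied by a gate: the sigmoid for Swish (with β = 1) and erf(ln(1 + e^x)) for Serf, as in the text. The Mish gate tanh(ln(1 + e^x)) is the commonly cited definition and does not appear in the text above. The names `GATES` and `self_gated` are hypothetical helpers for this example.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic sigmoid, written so that exp() never overflows."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def softplus(z: float) -> float:
    """ln(1 + e^z), computed in an overflow-safe form."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


# Each activation is x * gate(x); only the gate differs.
GATES = {
    "swish": sigmoid,                            # beta = 1
    "mish": lambda z: math.tanh(softplus(z)),    # assumed standard definition
    "serf": lambda z: math.erf(softplus(z)),     # as defined in the text
}


def self_gated(name: str, x: float) -> float:
    """Evaluate the named self-gated activation at x."""
    return x * GATES[name](x)


if __name__ == "__main__":
    for name in GATES:
        values = [self_gated(name, x) for x in (-10.0, -5.0, -1.0, 0.0, 10.0)]
        print(name, [round(v, 4) for v in values])
```

For large positive inputs every gate approaches 1, so the output approaches x. For large negative inputs every gate approaches 0, so the output approaches 0. The dip between those regimes, where the value at x = -1 lies below the value at x = -5, is what makes these functions non-monotonic.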
With β = 1, so that f(x) = x · σ(x), the derivative of Swish is

$$
\begin{aligned}
f'(x) &= \sigma(x) + x\,\sigma(x)\bigl(1 - \sigma(x)\bigr) \\
      &= \sigma(x) + x\,\sigma(x) - x\,\sigma(x)^2 \\
      &= x\,\sigma(x) + \sigma(x)\bigl(1 - x\,\sigma(x)\bigr) \\
      &= f(x) + \sigma(x)\bigl(1 - f(x)\bigr)
\end{aligned}
$$

The first and second derivatives of Swish are shown in Figure 2.

The authors of the research paper first proposing the Swish activation function found that it outperforms ReLU and its variants, such as Parametric ReLU (PReLU), Leaky ReLU (LReLU), Softplus, Exponential Linear Unit (ELU), Scaled Exponential Linear Unit (SELU) and Gaussian Error Linear Unit (GELU), on a variety of datasets such as ImageNet and CIFAR when applied to pre-trained models. Swish is one of the newer activation functions; it was first proposed in 2017 using a combination of exhaustive and reinforcement learning-based search.

Below is the image of the Tanh activation function and its derivative. The choice of activation function is very important and can greatly influence the accuracy and training time of a model. Moreover, the derivatives of Softplus, GELU, SiLU, Swish, and Mish are also.
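The closed form of the Swish derivative for β = 1, f'(x) = f(x) + σ(x)(1 − f(x)), can be sanity-checked against a numerical derivative. The sketch below is only illustrative: the helper names `swish_grad` and `central_difference` and the step size `h=1e-5` are assumptions of this example.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic sigmoid, written so that exp() never overflows."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def swish(x: float) -> float:
    """Swish with beta = 1: f(x) = x * sigmoid(x)."""
    return x * sigmoid(x)


def swish_grad(x: float) -> float:
    """Closed-form derivative: f'(x) = f(x) + sigmoid(x) * (1 - f(x))."""
    fx = swish(x)
    return fx + sigmoid(x) * (1.0 - fx)


def central_difference(f, x: float, h: float = 1e-5) -> float:
    """Second-order finite-difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


if __name__ == "__main__":
    for x in (-5.0, -3.0, -1.0, 0.0, 1.0, 2.0):
        analytic = swish_grad(x)
        numeric = central_difference(swish, x)
        print(f"x={x:+.1f}  analytic={analytic:+.6f}  numeric={numeric:+.6f}")
```

The derivative is negative for sufficiently negative inputs, which reflects the non-monotonic dip, and it rises above 1 for inputs somewhat larger than 1.25.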
